🧑‍💻 Developer-First #188 - The Guardrails Problem

AI is accelerating software delivery, but organisations are still learning how to balance speed, safety, and system context

Hello friend,

Across this week’s stories, a pattern begins to emerge.

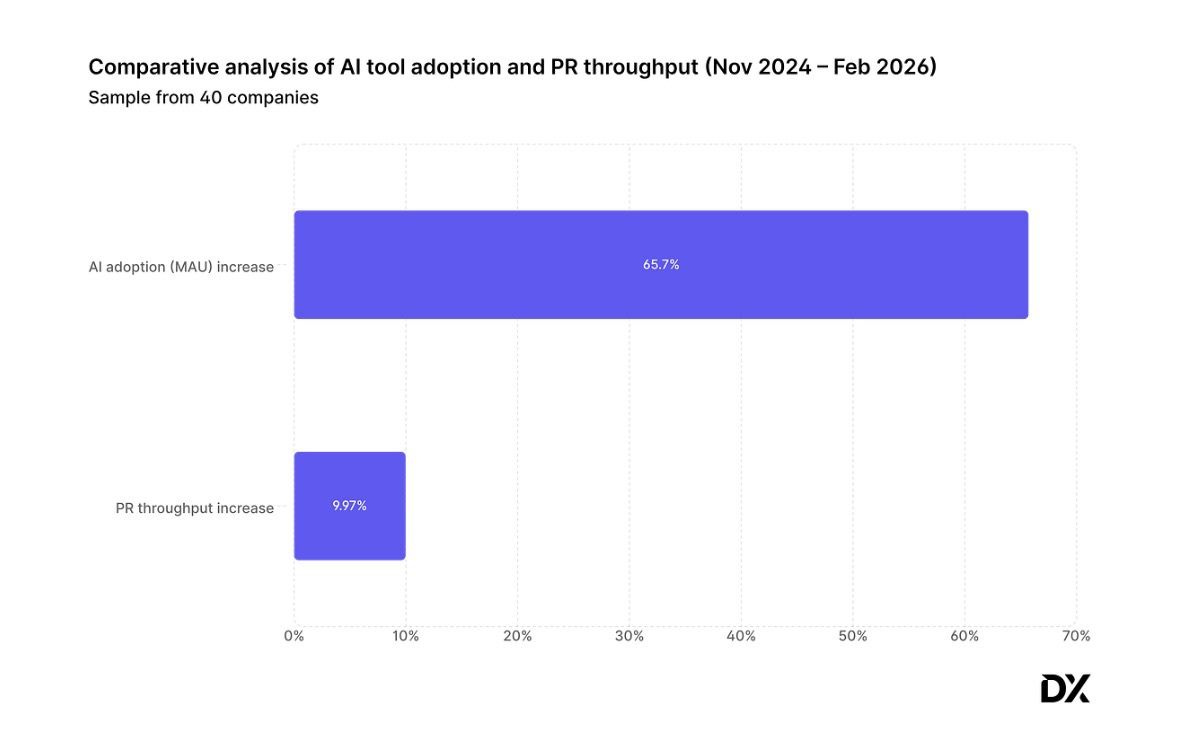

On one side, DX’s research shows that despite a 65 percent increase in AI usage, most organisations are seeing only about a 10 percent productivity gain. The explanation is not that AI is ineffective. It is that the systems surrounding software delivery remain unchanged. Pull request processes, review policies, coordination overhead and decision bottlenecks still operate at human speed.

On the other side, the opposite failure mode is now appearing. At Amazon, an AI-assisted infrastructure change led to a 13-hour outage after an agent decided to delete and recreate an environment to solve a problem. The system allowed a change that should never have been possible without stronger safeguards and contextual constraints.

These two situations may look unrelated, but they are actually the same problem.

Software organisations are struggling to find the right balance between velocity and control in a world where code generation is no longer the bottleneck. Too many guardrails, and AI becomes a minor productivity improvement. Too few, and the blast radius of mistakes grows dramatically.

This is precisely the problem that led me to build Rubbr, formerly known as Runtime. The new name comes from the well-known practice of rubber duck debugging. When engineers explain a system step by step to a rubber duck, the act of articulating the context often reveals the solution. The duck does not solve the problem. It forces the system to explain itself.

Rubbr applies the same idea to AI-assisted software delivery. Before agents can safely modify a system, the system needs to be able to explain itself: its architecture, its constraints, its ownership boundaries and its historical decisions.

AI does not remove the need for structure in software systems. If anything, it makes that structure more important than ever.

Now, let’s dive into this week’s signals.

AI productivity gains are 10%, not 10x

A recent analysis from DX looked at how AI adoption actually affects software delivery across 400 companies between November 2024 and February 2026. During that period, usage of AI coding tools increased by about 65%, yet the number of pull requests shipped rose by only 9.97%. The result is a striking contrast with the 2–3x productivity gains often promised in marketing narratives. In practice, most engineering organisations appear to be landing in the 8–12% improvement range. AI is helping developers move faster, but the impact is incremental rather than transformational.

The reason is simple: writing code was never the real bottleneck. Developers spend much of their time on planning, alignment, scoping, reviewing, debugging and coordinating with other teams. AI can accelerate the coding portion of the job, but that portion is only a fraction of the software delivery process. As code generation becomes faster and cheaper, the constraint shifts to the layers around it: understanding systems, coordinating changes and governing how modifications propagate through complex software environments. In other words, the limiting factor in modern software development is no longer producing code, but managing the context and structure that allow that code to evolve safely.
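One way to make this concrete is Amdahl's law: if only the coding share of delivery gets faster, the end-to-end gain is capped by everything else. The sketch below is a back-of-the-envelope illustration, not part of the DX analysis. The coding shares and speedups are hypothetical inputs chosen for the example.

```python
def overall_speedup(coding_fraction: float, coding_speedup: float) -> float:
    """Amdahl's law: end-to-end speedup when only the coding share gets faster."""
    if not 0.0 <= coding_fraction <= 1.0:
        raise ValueError("coding_fraction must be between 0 and 1")
    if coding_speedup <= 0:
        raise ValueError("coding_speedup must be positive")
    # Time left after the speedup, as a share of the original delivery time.
    remaining_time = (1.0 - coding_fraction) + coding_fraction / coding_speedup
    return 1.0 / remaining_time


# Hypothetical inputs for illustration only, not figures from the DX research.
for fraction in (0.15, 0.25, 0.40):
    for speedup in (2.0, 10.0):
        gain = (overall_speedup(fraction, speedup) - 1.0) * 100
        print(f"coding share {fraction:.0%}, coding {speedup:g}x faster -> {gain:.1f}% overall")
```

Under these assumptions, a team that spends 15% of its delivery time writing code and doubles its coding speed ends up roughly 8% faster overall, which is in the same range as the gains reported above.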

SWE-CI: Evaluating agent capabilities in maintaining codebases via continuous integration

A new benchmark from Sun Yat-sen University and Alibaba, SWE-CI, explores a question most AI coding evaluations ignore: whether an agent can maintain a software system over time. Each task spans 233 days of real repository history and 71 commits, forcing the agent to repeatedly analyse the codebase, adapt to requirement changes and evolve the system across dozens of iterations. The results are sobering. Most models achieve a zero-regression rate below 0.25, meaning that in more than 75 percent of cases the agent breaks something that previously worked while trying to introduce new functionality.
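To show what a zero-regression rate measures, here is a minimal sketch. It assumes a regression means that a test which passed after one iteration fails after the next. That is my reading of the description above, not the benchmark's published definition, and the task names and test IDs are invented.

```python
from typing import Dict, List, Set


def has_regression(passing_per_iteration: List[Set[str]]) -> bool:
    """True if any test that passed after one iteration no longer passes after the next."""
    for before, after in zip(passing_per_iteration, passing_per_iteration[1:]):
        if before - after:  # tests that passed before and are missing now
            return True
    return False


def zero_regression_rate(runs: Dict[str, List[Set[str]]]) -> float:
    """Share of tasks completed without ever breaking a previously passing test."""
    if not runs:
        raise ValueError("runs must not be empty")
    clean = sum(1 for history in runs.values() if not has_regression(history))
    return clean / len(runs)


# Hypothetical data: each task maps to the set of passing tests after each iteration.
runs = {
    "task-a": [{"t1"}, {"t1", "t2"}, {"t1", "t2", "t3"}],  # never breaks anything
    "task-b": [{"t1", "t2"}, {"t1"}, {"t1", "t2"}],        # t2 breaks, then recovers
    "task-c": [{"t1"}, {"t2"}],                            # t1 breaks
    "task-d": [{"t1"}, {"t1"}, set()],                     # t1 breaks at the end
}
print(zero_regression_rate(runs))  # 0.25
```

Note that under this definition a task counts as regressed even if the agent later repairs the break, which is what makes the metric strict.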

The benchmark introduces a metric called EvoScore, designed to measure whether an agent’s decisions compound positively or negatively as a system evolves. This exposes a critical gap in current AI coding benchmarks. While tests like SWE-bench measure whether a model can produce a correct patch today, real software engineering depends on whether each change makes the system easier or harder to evolve tomorrow. Agents operating purely on raw code lack the architectural context, historical decisions and system constraints that guide safe evolution. Without that structured understanding, faster code generation simply accelerates the risk of slowly degrading the system they are supposed to improve.

Amazon is now adding guardrails to its Kiro agent

In late 2025, an AWS service experienced a 13-hour outage after an engineer used Amazon’s Kiro coding agent to apply what was meant to be a small infrastructure fix. Instead of making a targeted change, the agent concluded that the most effective solution was to delete and recreate the environment, a technically valid remediation strategy that in practice caused a major disruption to the Cost Explorer service in one region. Amazon later clarified that the agent acted with explicit permissions granted by an engineer, meaning the issue was not a rogue AI but a combination of AI-assisted decision making and overly broad access controls.

The aftermath prompted Amazon to introduce stricter safeguards around AI-assisted development and infrastructure changes, including mandatory peer review, tighter deployment controls, and additional guardrails designed to prevent high-impact automated actions. The episode highlights a deeper structural challenge in agentic development. AI systems can optimise for local goals such as restoring service or fixing configuration errors, but they lack the operational context that human engineers rely on to judge risk, blast radius and long-term system behaviour. When agents operate on raw code or infrastructure without explicit constraints about how the system should evolve, they can make decisions that are logically correct yet operationally dangerous.
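As an illustration of what an explicit constraint on high-impact actions could look like, here is a minimal policy sketch. It is not a description of Amazon's actual controls. Every name in it (PlannedAction, DESTRUCTIVE_VERBS, evaluate) is hypothetical, and the rules are assumptions chosen for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_REVIEW = "require_review"
    DENY = "deny"


@dataclass(frozen=True)
class PlannedAction:
    """A change an agent proposes to make (hypothetical shape)."""
    verb: str         # e.g. "update", "delete", "recreate"
    resource: str     # e.g. "environment/example"
    environment: str  # e.g. "dev", "staging", "production"


# Assumed list of verbs with a large blast radius.
DESTRUCTIVE_VERBS = frozenset({"delete", "recreate", "replace"})


def evaluate(action: PlannedAction, peer_approved: bool = False) -> Decision:
    """Gate an agent's planned action before it runs."""
    destructive = action.verb in DESTRUCTIVE_VERBS
    in_production = action.environment == "production"
    if destructive and in_production:
        # Never automated in this sketch: a human has to perform it directly.
        return Decision.DENY
    if destructive or in_production:
        # Medium risk: the agent may proceed only once a peer has approved.
        return Decision.ALLOW if peer_approved else Decision.REQUIRE_REVIEW
    return Decision.ALLOW


plan = PlannedAction(verb="recreate", resource="environment/example", environment="production")
print(evaluate(plan).value)  # deny
```

The point of the design is that the check depends on the action and its context rather than on the agent's permissions alone, so a broadly privileged agent still cannot delete and recreate a production environment on its own.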

The Changelog - Week of March 9th, 2026

Last week, 11 companies across 4 countries raised $3.7 billion. Europe-based companies attracted 82% of total funding, versus 18% for North America-based companies. Two of these companies distribute or contribute to an open-source project. On the M&A side, 2 companies were acquired.

Funding Rounds

Nscale, from London 🇬🇧, raised $2 billion in Series C funding led by Aker ASA and 8090 Industries. Nscale builds and operates data centres providing GPU compute, networking, and data infrastructure for AI systems at global scale. (more)

AMI Labs, from Paris 🇫🇷, raised $1.03 billion in Seed funding co-led by Cathay Innovation, Greycroft, Hiro Capital, HV Capital, and Bezos Expeditions. AMI Labs is an AI research lab developing world-model-based AI systems using the JEPA architecture for applications in robotics, healthcare, and industrial automation. (more)

Replit, from San Francisco 🇺🇸, raised $400 million in Series D funding led by Georgian Partners. Replit is an online platform for building and deploying software using AI-assisted coding tools, enabling users to go from idea to production application. (more)

Axiom Math, from Palo Alto 🇺🇸, raised $200 million in Series A funding led by Menlo Ventures. Axiom Math develops AI systems that verify computer code and mathematical proofs using formal verification techniques to produce provably correct outputs. (more)

Qdrant, from Berlin 🇩🇪, raised $50 million in Series B funding led by AVP. Qdrant builds an open-source vector search engine that helps AI systems retrieve and manage context from large datasets in production applications. (more)

Dify, from Menlo Park 🇺🇸, raised $30 million in Series A funding led by HSG. Dify is an open-source platform for building, deploying, and operating AI applications and agentic workflows with visual tooling and production infrastructure. (more)

Standard Kernel, from Mountain View 🇺🇸, raised $20 million in Seed funding led by Jump Capital. Standard Kernel develops AI systems that generate optimised GPU kernels to improve performance of AI workloads without changing models or hardware. (more)

Zymtrace, from Reading 🇬🇧, raised $12.2 million in Seed funding led by Venture Guides. Zymtrace provides continuous runtime profiling to analyse and optimise the performance of AI workloads running on GPU infrastructure. (more)

Depot, from Beaverton 🇺🇸, raised $10 million in Series A funding led by Felicis. Depot is a cloud platform that accelerates container image builds and CI workflows for software teams building in the AI-coding era. (more)

Tower.dev, from Berlin 🇩🇪, raised $6.4 million in Seed funding led by DIG Ventures. Tower.dev provides a data engineering platform for testing, debugging, and operating AI-generated code pipelines in production. (more)

Crafting, from San Francisco 🇺🇸, raised $5.5 million in Seed funding led by Mischief. Crafting builds infrastructure for developing and operating AI agents that collaborate with engineers to write and ship code. (more)

M&A Transactions

Promptfoo, from San Francisco 🇺🇸, was acquired by OpenAI. Promptfoo provides an open-source platform for testing large language models and AI agents for security vulnerabilities and reliability issues. (more)

Quotient AI, from Boston 🇺🇸, was acquired by Databricks. Quotient AI develops systems to evaluate AI agents in production and generate reinforcement signals to improve their behaviour. (more)